A landmark study from Google Research, Google DeepMind, and MIT -- Towards a Science of Scaling Agent Systems -- just confirmed what we have been saying at Hendricks: when it comes to AI, architecture beats tools every time.

The research team ran 180 controlled experiments across three major AI model families (OpenAI, Google, Anthropic), four benchmarks, and five different agent architectures. Their conclusion challenges the prevailing industry narrative that throwing more AI agents at a problem automatically produces better results.

It does not. And for service-intensive companies investing in AI operations, this distinction is worth millions.

The “More Agents Is Better” Myth

The AI industry has been operating on a seductive assumption: more agents is all you need. Need better financial analysis? Add more agents. Need faster workflows? Spin up a bigger team of AI workers.

Google’s research dismantles this. Across all benchmarks and architectures, the overall mean performance improvement from multi-agent systems was -3.5% -- that is a decline, not a gain. The variance was enormous: from +81% improvement on structured financial analysis tasks down to -70% degradation on sequential planning tasks.

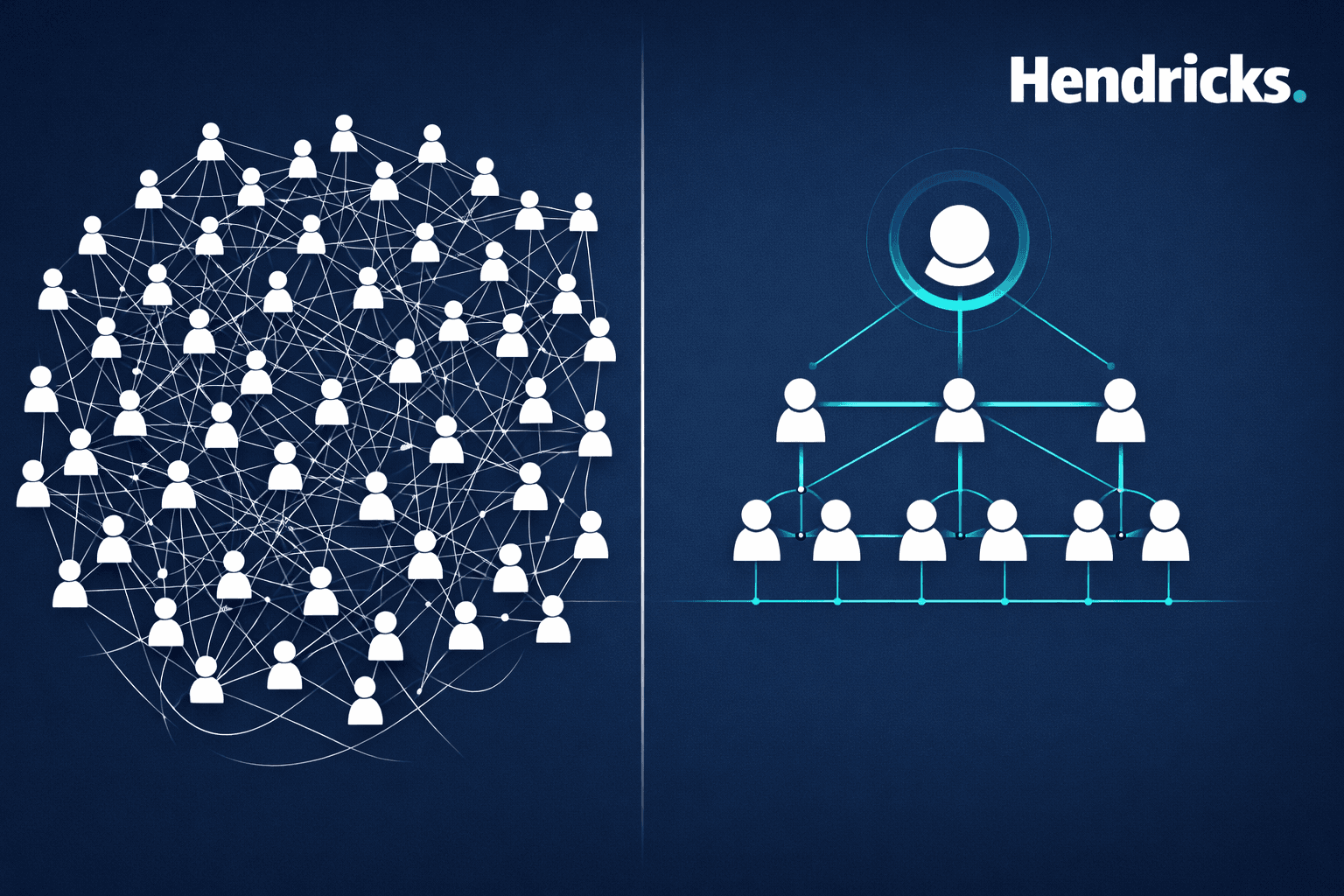

The difference was not the number of agents. It was the architecture.

Three Scaling Principles Every Operations Leader Should Know

1. The Tool-Coordination Trade-Off

When tasks involve many tools and complex environments, multi-agent coordination overhead actually fragments the computational budget. Each agent gets less capacity for real reasoning because tokens are consumed by inter-agent communication instead.

In practical terms: if your operation requires a single workflow touching 16 different tools -- your CRM, ERP, email system, analytics platform, and so on -- adding more agents makes it worse, not better. A well-architected single-agent system outperforms a multi-agent team on tool-heavy sequential work.

2. The Capability Ceiling

When a single AI agent already performs above approximately 45% accuracy on a task, adding more agents produces diminishing or negative returns. The coordination costs exceed the marginal improvement potential.

The implication for service-intensive operations: before investing in complex multi-agent orchestration, measure your single-agent baseline. If it is already performing well, your investment should go toward better architecture and process design -- not more agents.

3. Architecture-Dependent Error Amplification

This finding is critical. Independent agent systems -- where multiple agents work in isolation and their outputs are simply aggregated -- amplify errors by 17.2x. Errors made by one agent cascade unchecked through the entire output.

Centralized architectures, where an orchestrator validates sub-agent work before aggregation, contain this to 4.4x. The presence or absence of verification bottlenecks -- an architectural decision -- is the difference between a system that self-corrects and one that compounds mistakes.

When Multi-Agent Systems Actually Work

The research is not anti-multi-agent. It is anti-heuristic. Multi-agent systems deliver real value when the architecture matches the task structure:

Structured, decomposable work (+81% improvement): Financial analysis, where revenue trends, cost structures, and market comparisons can be analyzed independently by separate agents and then synthesized by an orchestrator. Centralized coordination improved performance by 80.9% over single-agent baselines.

Dynamic, exploratory work (+9.2% improvement): Web navigation and information retrieval, where decentralized agents exploring in parallel outperformed centralized control. The search space benefits from distributed exploration.

Sequential, state-dependent work (-39% to -70% degradation): Planning tasks where each step depends on the previous one -- like inventory management or multi-step compliance workflows. Every multi-agent variant degraded performance because coordination overhead fragments reasoning capacity on work that is inherently serial.

The Architecture Selection Framework

The researchers derived a predictive model that correctly selects the optimal agent architecture 87% of the time, based on measurable task properties -- not intuition. Three task archetypes emerged:

Planning tasks (few tools, high baseline performance, sequential dependencies) favor single-agent systems. Analysis tasks (moderate tools, lower baseline, decomposable subtasks) favor centralized multi-agent architectures. Tool-heavy tasks (many tools, high parallelization potential) favor decentralized multi-agent approaches.

The critical decision boundary: if your single-agent baseline already exceeds approximately 45% performance, multi-agent coordination is unlikely to help. Invest in better process orchestration instead.

What This Means for Service-Intensive Operations

For service-intensive businesses where growth is bottlenecked by operations -- the organizations Hendricks serves -- these findings translate to three actionable principles:

Diagnose Before You Deploy

The research confirms that task decomposability, not task difficulty, determines whether multi-agent systems help. Before deploying any AI agent system, map your workflows: which processes have independent, parallelizable subtasks? Which are strictly sequential? The architecture must match the work.

Architecture Over Agents

Adding agents to a poorly architected system is like adding employees to a broken process -- you get more activity but worse outcomes. The study found that the coordination structure (centralized, decentralized, hybrid) explained more performance variance than the number of agents or even the capability of the underlying AI model.

Measure the Coordination Tax

Every multi-agent system introduces overhead: 58% to 515% more computational cost than single-agent baselines, depending on architecture. The research identified an optimal band of 200-300% overhead where coordination mechanisms are fully engaged but not yet counterproductive. Beyond 400% overhead, you are in over-coordination territory where protocol complexity itself becomes a failure mode.

The Bottom Line

The science is clear: multi-agent AI performance is governed by quantifiable trade-offs between architectural properties and task characteristics. “More agents” is a heuristic. Architecture selection based on measurable task properties is engineering.

This is precisely why Hendricks approaches AI implementation as an architecture problem, not a tools problem. The right architecture compounds performance. The wrong one compounds costs.

The question is not “how many AI agents do we need?” It is “what does our operating architecture need to look like for AI to actually work?”

Multi-agent AI performance ranges from +81% to -70% depending on architecture-task alignment. The difference is not the number of agents -- it is the architecture. Architecture over tools. Systems over tasks. Results over hype.

Hendricks designs, installs, and operates intelligent operating architecture for service-intensive businesses. If your AI investments are not delivering expected returns, the problem is likely architectural. Start a conversation about what the right architecture looks like for your organization.

Source: Kim, Y., Gu, K., Park, C., et al. “Towards a Science of Scaling Agent Systems.” Google Research, Google DeepMind, and MIT. arXiv:2512.08296v2, December 2025.

Frequently Asked Questions

Do more AI agents improve performance?

Not necessarily. Research from Google, DeepMind, and MIT across 180 configurations found the overall mean improvement from multi-agent systems was -3.5%. Performance ranged from +81% on decomposable financial analysis to -70% on sequential planning tasks. The architecture, not the number of agents, determines outcomes.

When do multi-agent AI systems work best?

Multi-agent systems excel on structured, decomposable tasks where subtasks can be analyzed independently and synthesized by an orchestrator. Financial analysis saw +81% improvement with centralized coordination. They degrade on sequential tasks where each step depends on the previous one.

What is the tool-coordination trade-off in AI agent systems?

When tasks involve many tools, multi-agent coordination overhead fragments the computational budget. Each agent gets less capacity for reasoning because resources are consumed by inter-agent communication. For tool-heavy sequential workflows, a single well-architected agent outperforms multi-agent teams.

How do you choose the right AI agent architecture?

Architecture selection should be based on measurable task properties: task decomposability, tool count, and single-agent baseline performance. Planning tasks favor single-agent systems, analysis tasks favor centralized multi-agent, and tool-heavy parallelizable tasks favor decentralized multi-agent approaches. A predictive model achieves 87% accuracy in selecting the optimal architecture.

What is the capability ceiling for multi-agent AI systems?

When a single AI agent already performs above approximately 45% accuracy on a task, adding more agents produces diminishing or negative returns. The coordination costs exceed the marginal improvement potential. Investment should go toward better architecture and process design instead.